A New Language Model Technology

Google introduced a breakthrough technological know-how termed Tranquil that speeds up big language styles (like GPT-3 and LaMDA) devoid of compromising overall performance concentrations.

Bigger Training Facts Is Superior But Comes With a Price tag

Big Language Styles (LLMs) practice on huge quantities of knowledge.

Education the language products on greater quantities of data benefits in the design finding out new capabilities that aren’t generally planned for.

For illustration, introducing much more training facts to a language design can unexpectedly outcome in it getting the capability to translate concerning diverse languages, even even though it was not skilled to do that.

These new qualities are known as emergent capabilities, talents that are not necessarily planned for.

A various research paper (PDF) about emergent capabilities states:

“Although there are dozens of examples of emergent talents, there are presently handful of powerful explanations for why this sort of qualities emerge in the way they do.”

They just cannot clarify why various qualities are learned.

But it is very well recognised that scaling up the quantity of info for coaching the machine permits it to get a lot more skills.

The downside of scaling up the schooling information is that it can take a lot more computational electric power to produce an output, which will make the AI slower at the time it is generating a textual content output (a instant that is called the “inference time”).

So the trade-off with producing an AI smarter with more facts is that the AI also results in being slower at inference time.

Google’s new investigate paper (Self-confident Adaptive Language Modeling PDF) describes the problem like this:

“Recent advancements in Transformer-based mostly large language models (LLMs) have led to major effectiveness advancements throughout a lot of jobs.

These gains appear with a drastic boost in the models’ sizing, possibly top to gradual and highly-priced use at inference time.”

Confident Adaptive Language Modeling (Serene)

Scientists at Google arrived upon an interesting alternative for speeding up the language designs even though also keeping large functionality.

The resolution, to make an analogy, is relatively like the difference among answering an uncomplicated problem and fixing a much more difficult just one.

An uncomplicated problem, like what colour is the sky, can be answered with minor thought.

But a tricky answer demands 1 to stop and assume a little additional to come across the response.

Computationally, big language models do not make a difference between a tricky element of a text technology process and an quick section.

They make text for equally the uncomplicated and tricky areas working with their complete computing power at inference time.

Google’s option is termed Confident Adaptive Language Modeling (Tranquil).

What this new framework does is to dedicate fewer sources to trivial portions of a text technology endeavor and commit the full electricity for a lot more tough sections.

The research paper on Calm states the issue and option like this:

“Recent improvements in Transformer-based mostly massive language types (LLMs) have led to major overall performance enhancements throughout quite a few jobs.

These gains arrive with a drastic boost in the models’ dimension, probably foremost to sluggish and high-priced use at inference time.

In follow, on the other hand, the series of generations manufactured by LLMs is composed of different concentrations of problems.

Although specific predictions definitely advantage from the models’ total potential, other continuations are much more trivial and can be solved with lowered compute.

…While big types do superior in basic, the identical sum of computation could not be needed for just about every enter to attain similar effectiveness (e.g., based on if the input is easy or difficult).”

What is Google Relaxed and Does it Perform?

Calm operates by dynamically allocating means based on the complexity of the unique component of the process, making use of an algorithm to predict whether anything requirements total or partial assets.

The exploration paper shares that they tested the new system for several all-natural language processing responsibilities (“text summarization, device translation, and question answering”) and discovered that they were in a position to speed up the inference by about a variable of a few (300{5376dfc28cf0a7990a1dde1ec4d231557d3d9e6448247a9e5e61bb9e48b1de73}).

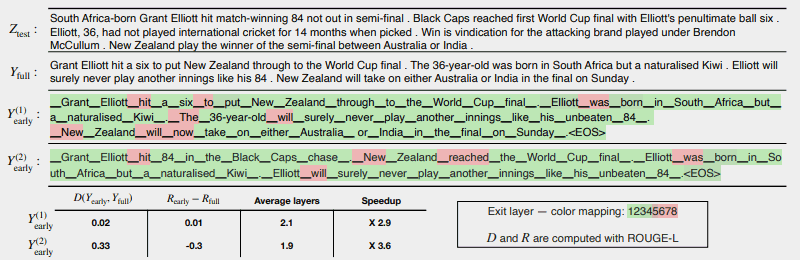

The pursuing illustration displays how properly the Relaxed program will work.

The number of regions in crimson point out wherever the equipment experienced to use its total capability on that part of the activity.

The areas in eco-friendly are wherever the device only utilized considerably less than fifty percent capability.

Purple = Entire Capability/Environmentally friendly = Significantly less Than 50 percent Ability

This is what the exploration paper suggests about the earlier mentioned illustration:

“CALM accelerates the era by early exiting when attainable, and selectively making use of the whole decoder’s ability only for few tokens, shown below on a CNN/DM instance with softmax-primarily based self confidence measure. Y (1) early and Y (2) early use various self confidence thresholds for early exiting.

Bellow (sic) the textual content, we report the calculated textual and risk regularity of just about every of the two outputs, along with effectiveness gains.

The shades symbolize the number of decoding layers utilised for each and every token—light eco-friendly shades point out fewer than fifty percent of the overall levels.

Only a number of chosen tokens use the complete capacity of the model (coloured in crimson), though for most tokens the model exits following a person or handful of decoding levels (colored in eco-friendly).”

The researchers concluded the paper by noting that applying Calm involves only small modifications in order to adapt a large language model to develop into more rapidly.

This study is crucial simply because it opens the doorway to building a lot more elaborate AI models that are trained on substantially much larger knowledge sets with out experiencing slower velocity though retaining a high performance stage.

But it may be achievable that this system can also reward massive language types that are trained on fewer information as perfectly.

For instance, InstructGPT types, of which ChatGPT is a sibling model, are experienced on roughly 1.3 billion parameters but are continue to able to outperform styles that are trained on significantly additional parameters.

The researchers mentioned in the summary:

“Overall, our comprehensive adaptive compute framework for LMs necessitates nominal modifications to the underlying model and allows efficiency gains even though fulfilling rigorous top quality assures for the output.”

This information about this investigate paper was just posted on Google’s AI site on December 16, 2022. The analysis paper by itself is dated October 25, 2022.

It will be attention-grabbing to see if this technological know-how tends to make it way into massive language types of the around potential.

Study Google’s blog site publish:

Accelerating Text Era with Self-assured Adaptive Language Modeling (Relaxed)

Browse the Investigate Paper:

Self-confident Adaptive Language Modeling (PDF)

Highlighted image by Shutterstock/Learn1305